Difference between revisions of "Strategic Initiatives TSC Dashboard"

WoodyBeeler (talk | contribs) |

| (45 intermediate revisions by 4 users not shown) | |||

From those, the TSC accepted volunteers to draft metrics with which to measure the TSC criteria. | From those, the TSC accepted volunteers to draft metrics with which to measure the TSC criteria. | ||

| − | + | ||

| − | *[http://gforge.hl7.org/gf/ | + | <!-- --> |

| − | * | + | |

| − | + | ===Industry responsiveness and easier implementation=== | |

| − | + | *Draft Strategic Dashboard criteria metrics recommendations from Mead for: [http://gforge.hl7.org/gf/download/trackeritem/2060/9460/20120513MeadDeliverable.docx Industry responsiveness and easier implementation] | |

| − | + | *Transition to Mead | |

| − | + | *Staff support: Dave Hamill | |

| − | + | <!-- --> | |

| − | + | <!-- --> | |

| − | + | ===Requirements traceability and cross-artifact consistency=== | |

| − | + | ====Requirements traceability==== | |

| − | + | - Tied to HL7 roll out of SAIF ECCF. | |

| − | + | *measure is the number of milestones completed: | |

| − | + | **Informative SAIF Canonical document published | |

| − | + | **DSTU SAIF Canonical document published | |

| − | + | **Normative SAIF Canonical document published | |

| − | * | + | **Peer Reviewed HL7 SAIF IG including ECCF chapter and draft SAIF Artifact Definitions |

| − | **** | + | **DSTU HL7 SAIF IG published |

| − | + | **Normative HL7 SAIF IG published | |

| − | + | **SAIF Architecture Program experimental phase of HL7 SAIF ECCF implementation completed | |

| − | + | **SAIF AP trial use phase of HL7 SAIF ECCF implementation completed | |

| − | * | + | **HL7 SAIF ECCF rolled to all work groups working on applicable standards (V3 primarily) |

| − | + | <!-- --> | |

| − | + | ====Cross-artifact consistency==== | |

| − | + | ''(per TSC decision [[2012-03-26 TSC Call Agenda|2012-03-26]])'' | |

| + | *'''Survey of newly published V3 Standards''' via the [http://www.hl7.org/permalink/?PublicationRequestTemplate publication request form] | ||

| + | **Key - Not including Implementation Guides ''(what about DAMs or foundational standards which define no artifacts?)'' | ||

| + | ***Green: All new standards have one or more of the following checked; | ||

| + | ***Yellow: 1-2 standards have none of the following checked; | ||

| + | ***Red: 3 or more standards have none of the following checked. | ||

| + | **Standard uses CMETs from HL7-managed CMETs in COCT, POCP (Common Product) and other domains | ||

| + | **Standard uses harmonized design patterns (as defined through RIM Pattern harmonization process) | ||

| + | **Standard is consistent with common Domain Models including Clinical Statement, Common Product Model and "TermInfo" | ||

| + | *'''Harmonization Participation''': Percent of Work groups in FTSD, SSDSD and DESD participating in RIM/Vocab/Pattern Harmonization meetings, calculated as a 3 trimester moving average - Green of 75% or better participation. Yellow 50-74%. 0-49 is red. | ||

| + | *Staff Support: Lynn Laakso | ||

| + | <!-- --> | ||

| + | <!-- --> | ||

| + | |||

| + | ===Product Quality=== | ||

| + | *'''Product Development Effectiveness''' Strategic Dashboard criteria metrics for average number of ballot cycles necessary to pass a normative standard. Calculated as a cycle average based on publication requests. Includes: | ||

| + | *:(Note: This metric uses "ballots" rather than ballot cycles because more complex ballots frequently require two-cycles to complete reconciliation and prepare for a subsequent ballot.) | ||

| + | *#Projects at risk of becoming stale 2 cycles after last ballot | ||

| + | *#Two cycles since last ballot but not having posted a reconciliation or request for publication for normative edition | ||

| + | *#items going into third or higher round of balloting for a normative specification; | ||

| + | *:(Note: This flags concern while the process is ongoing; it is not necessarily a measure of quality once the document has finished balloting). | ||

| + | **Key | ||

***Green: 1-2 ballots | ***Green: 1-2 ballots | ||

***Yellow: 3-4 ballots | ***Yellow: 3-4 ballots | ||

***Red: 5+ ballots | ***Red: 5+ ballots | ||

| − | *Publishing will create a "Ballot Quality" metric that creates a green/yellow/red result by amalgamating specific elements such as: | + | **Results (T1 = Jan-May, T2 = May-Sep, T3 = Sep-Jan) - |

| − | *#ability to create schemas that validate (metric of model correctness), | + | ***2014T1 2014Jan-May -> 1.8 = Green |

| − | *#the presence of class- and attribute-level descriptions in a static model (metric of robust documentation) | + | ***2013T3 2013Sep-2014Jan -> 1.0 = Green |

| − | *# | + | ***2013T2 2013May-Sep -> 1.4 = Green; [http://gforge.hl7.org/gf/download/docmanfileversion/7511/10886/Cycles_to_Normative_by_wg_with_ANSIDate.xlsx 2013Sep Release] |

| − | * | + | ***2013T1 2013Jan-May average -> 1.7 = Green; [http://gforge.hl7.org/gf/download/frsrelease/995/10408/Cycles_to_Normative_by_wg_with_ANSIDate.xlsx 2013May Release] |

| − | *# | + | ***2012T3 2012Sep-2013Jan average -> #DIV/0! (no ANSI publications this period); [http://gforge.hl7.org/gf/download/frsrelease/923/9596/Cycles_to_Normative_by_wg_with_ANSIDate.xlsx 2012Sep Release] includes trend on the average of cycles to reach normative status and ANSI publication. |

| − | * | + | ***2012T2 May-Sep average -> 1.33 = Green |

| − | * | + | ***2012T1 Jan-May average -> 2.71 = Yellow ? |

| + | <br/> | ||

| + | *Publishing will also create a "'''Product Ballot Quality'''" metric (at the level of the product, but generated for each Ballot) that creates a green/yellow/red result by amalgamating specific elements such as: | ||

| + | **Content quality, including: | ||

| + | **#ability to create schemas that validate (metric of model correctness) ''[where failure could have been prevented by the product developer]'', | ||

| + | **#the presence of class- and attribute-level descriptions in a static model (metric of robust documentation) | ||

| + | *Staff Support: Lynn Laakso | ||

| + | <!-- --> | ||

| + | <!-- --> | ||

| + | |||

| + | ===WGM Effectiveness=== | ||

| + | * [http://gforge.hl7.org/gf/project/tsc/tracker/?action=TrackerItemEdit&tracker_item_id=2057&start=0 Tracker #2057] was created to document the Strategic Dashboard criteria metrics for: '''WGM Effectiveness''' | ||

| + | **Draft Criteria | ||

| + | ***% of WG’s attending | ||

| + | ***% of WG’s who get agenda posted in appropriate timeframe | ||

| + | ***% of WG’s who achieve quorum at WGM | ||

| + | ***Number of US-based attendees | ||

| + | ***Number of International attendees | ||

| + | ***Number of non-member attendees | ||

| + | ***Profit or Loss | ||

| + | ***Nbr of FTAs | ||

| + | ***Nbr of Tutorials Offered | ||

| + | ***Nbr Students Attending Tutorials | ||

| + | ***Nbr of Quarters that WG's had agenda for; but did not make quorum | ||

| + | **For US-Based Locations: one set of %’s for success | ||

| + | **For International Locations: a different set of %’s for success | ||

| + | **Metrics may also be based on each WG's prior numbers | ||

| + | *Staff Support: Dave Hamill | ||

| + | *TSC Liaison: Jean Duteau | ||

| + | |||

| + | ===Dashboard=== | ||

| + | {|width=100% cellspacing=0 cellpadding=2 border="1" | ||

| + | |- | ||

| + | |bgcolor="#ccaaff" align=center width="25%"| Industry responsiveness and easier implementation | ||

| + | |bgcolor="#ffffaa" align=center width="25%"| Requirements Traceability<br/>and cross-artifact consistency | ||

| + | |bgcolor="#ddffaa" align=center| Product Quality | ||

| + | |bgcolor="#ccaaff" align=center width="25%"| WGM Effectiveness | ||

| + | |- | ||

| + | |valign=top| | ||

| + | '''SI Project assessment''' | ||

| + | * TBD | ||

| + | |||

| + | |valign=top| | ||

| + | '''Requirements traceability''' | ||

| + | |||

| + | * [[Media:SI_RequirementsTraceability_thermometer.png]] | ||

| + | *[[Image:SI_RequirementsTraceability_thermometer.png|250px|example for dashboard]] | ||

| + | |||

| + | |valign=top bgcolor="#ddffaa"| | ||

| + | '''Product Development Effectiveness ''' | ||

| + | *2014T1 Jan-May average -> 1.8 = Green | ||

| + | *2013T3 Sep-Jan average -> 1.0 = Green | ||

| + | *2013T2 May-Sep average -> 1.4 = Green | ||

| + | *2013T1 Jan-May average -> 1.7 = Green | ||

| + | *2012T3 2012Sep-2013Jan average -> #DIV/0! (no ANSI publications this period) | ||

| + | *2012T2 May-Sep average -> 1.33 = Green | ||

| + | *2012T1 Jan-May average -> 2.71 = Yellow ? | ||

| + | Key: | ||

| + | *Green: 1-2 ballots | ||

| + | *Yellow: 3-4 ballots | ||

| + | *Red: 5+ ballots | ||

| + | |||

| + | |||

| + | |valign=top| | ||

| + | '''WGM Effectiveness''' | ||

| + | |||

| + | [http://gforge.hl7.org/gf/download/docmanfileversion/7698/11223/WGMEffectiveness_20131203.xls Metrics Through Sept 2013 WGM] | ||

| + | |||

| + | |||

| + | |- | ||

| + | |||

| + | |valign=top rowspan=2| | ||

| + | '''TBD''' | ||

| + | |||

| + | |valign=top bgcolor="#ffaabb"| | ||

| + | '''Cross-Artifact Consistency''' | ||

| + | *2014May: RED (6 standards had none checked of 10 eligible for this measure) | ||

| + | *2014Jan: RED (5 standards had none checked of 5 eligible for this measure) | ||

| + | *2013Sep: RED (3 standards had none checked of 9 eligible for this measure) | ||

| + | *2013May: RED (4 standards had none checked of 8 eligible for this measure) | ||

| + | |||

| + | |||

| + | |valign=top rowspan=2| | ||

| + | '''Product Ballot Quality''' | ||

| + | |||

| + | |valign=top rowspan=2| | ||

| + | '''WGM Effectiveness - International meetings''' | ||

| + | * TBD | ||

| + | |||

| + | |- | ||

| + | |bgcolor="#ffffaa"| | ||

| + | Harmonization participation: | ||

| + | *64.65% rolling average ending 2014T1 = YELLOW | ||

| + | *51.25% rolling average ending 2013T3 = YELLOW | ||

| + | *46.28% rolling average ending 2013T2 = RED, - almost there... | ||

| + | *46.28% rolling average 2013T2 (May-Sep) to 2012T3 (2012Sep-2013Jan)= RED | ||

| + | *37.79% rolling average 2013T1 (Jan-May) to 2012T2 (May-Sep)= RED | ||

| + | *44.12% rolling average 2012T3 (2012Sep-2013Jan) to 2012T1 (Jan-May)= RED | ||

| + | |||

| + | |- | ||

| + | |||

| + | |} | ||

Latest revision as of 15:52, 25 April 2014

HL7 Strategic Initiatives Dashboard Project

Project Scope: The scope of this project is to implement a dashboard showing the TSC's progress on implementing the HL7 Strategic Initiatives for which the TSC is responsible. The HL7 Board of Directors has a process for maintaining the HL7 Strategic Initiatives, and the TSC is responsible for implementing a significant number of them. As part of developing a dashboard, the TSC will need to develop practical criteria for measuring progress in implementing the strategic initiatives. There are two aspects to the project. The first is the process of standing up the dashboard itself. This may end up being a discrete project handed off to Electronic Services and HQ. That dashboard would track progress towards all of HL7's strategic initiatives (not just those the TSC is responsible for managing). The second part of the project will focus on the Strategic Initiatives for which the TSC is responsible.

In discussion at 2011-09-10_TSC_WGM_Agenda in San Diego, the TSC considered a RASCI Chart showing which criteria under the Strategic Initiatives the TSC would take responsibility for.

From those, the TSC accepted volunteers to draft metrics with which to measure the TSC criteria.

Industry responsiveness and easier implementation

- Draft Strategic Dashboard criteria metrics recommendations from Mead for: Industry responsiveness and easier implementation

- Transition to Mead

- Staff support: Dave Hamill

Requirements traceability and cross-artifact consistency

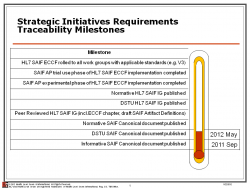

Requirements traceability

- Tied to HL7 roll out of SAIF ECCF.

- measure is the number of milestones completed:

- Informative SAIF Canonical document published

- DSTU SAIF Canonical document published

- Normative SAIF Canonical document published

- Peer Reviewed HL7 SAIF IG including ECCF chapter and draft SAIF Artifact Definitions

- DSTU HL7 SAIF IG published

- Normative HL7 SAIF IG published

- SAIF Architecture Program experimental phase of HL7 SAIF ECCF implementation completed

- SAIF AP trial use phase of HL7 SAIF ECCF implementation completed

- HL7 SAIF ECCF rolled to all work groups working on applicable standards (V3 primarily)

Cross-artifact consistency

(per TSC decision 2012-03-26)

- Survey of newly published V3 Standards via the publication request form

- Key - Not including Implementation Guides (what about DAMs or foundational standards which define no artifacts?)

- Green: All new standards have one or more of the following checked;

- Yellow: 1-2 standards have none of the following checked;

- Red: 3 or more standards have none of the following checked.

- Standard uses CMETs from HL7-managed CMETs in COCT, POCP (Common Product) and other domains

- Standard uses harmonized design patterns (as defined through RIM Pattern harmonization process)

- Standard is consistent with common Domain Models including Clinical Statement, Common Product Model and "TermInfo"

- Harmonization Participation: Percent of Work Groups in FTSD, SSDSD and DESD participating in RIM/Vocab/Pattern Harmonization meetings, calculated as a 3-trimester moving average. Green: 75% or better participation. Yellow: 50-74%. Red: 0-49%.

- Staff Support: Lynn Laakso
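
The two keys above can be expressed as a short Python sketch. This is only an illustration under stated assumptions: the function names are hypothetical, a full window of three trimesters is required, and averages that fall between the listed bands (for example 74.5%) are assigned to the lower band, which the key itself does not specify.

```python
from statistics import mean
from typing import Sequence, Tuple


def cross_artifact_status(standards_with_none_checked: int) -> str:
    """Map the count of new standards with no consistency box checked to a color."""
    if standards_with_none_checked < 0:
        raise ValueError("count cannot be negative")
    if standards_with_none_checked == 0:
        return "Green"   # every new standard has one or more items checked
    if standards_with_none_checked <= 2:
        return "Yellow"  # 1-2 standards have none checked
    return "Red"         # 3 or more standards have none checked


def harmonization_status(percent_by_trimester: Sequence[float]) -> Tuple[float, str]:
    """Average the three most recent trimesters and map the result to a color."""
    window = list(percent_by_trimester)[-3:]
    if len(window) < 3:
        raise ValueError("a 3-trimester moving average needs three values")
    average = mean(window)
    # Assumption: values between bands (49-50, 74-75) fall into the lower band.
    if average >= 75:
        return average, "Green"
    if average >= 50:
        return average, "Yellow"
    return average, "Red"
```

For example, `cross_artifact_status(6)` returns "Red", which agrees with the 2014May entry on the dashboard (6 standards had none checked).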

Product Quality

- Product Development Effectiveness: Strategic Dashboard criteria metric for the average number of ballot cycles necessary to pass a normative standard. Calculated as a cycle average based on publication requests. Includes:

- (Note: This metric uses "ballots" rather than ballot cycles because more complex ballots frequently require two cycles to complete reconciliation and prepare for a subsequent ballot.)

- Projects at risk of becoming stale 2 cycles after last ballot

- Two cycles since last ballot but not having posted a reconciliation or request for publication for normative edition

- Items going into a third or higher round of balloting for a normative specification

- (Note: This flags concern while the process is ongoing; it is not necessarily a measure of quality once the document has finished balloting.)

- Key

- Green: 1-2 ballots

- Yellow: 3-4 ballots

- Red: 5+ ballots

- Results (T1 = Jan-May, T2 = May-Sep, T3 = Sep-Jan) -

- 2014T1 2014Jan-May -> 1.8 = Green

- 2013T3 2013Sep-2014Jan -> 1.0 = Green

- 2013T2 2013May-Sep -> 1.4 = Green; 2013Sep Release

- 2013T1 2013Jan-May average -> 1.7 = Green; 2013May Release

- 2012T3 2012Sep-2013Jan average -> #DIV/0! (no ANSI publications this period); 2012Sep Release includes trend on the average of cycles to reach normative status and ANSI publication.

- 2012T2 May-Sep average -> 1.33 = Green

- 2012T1 Jan-May average -> 2.71 = Yellow ?

- Publishing will also create a "Product Ballot Quality" metric (at the level of the product, but generated for each Ballot) that creates a green/yellow/red result by amalgamating specific elements such as:

- Content quality, including:

- ability to create schemas that validate (metric of model correctness) [where failure could have been prevented by the product developer],

- the presence of class- and attribute-level descriptions in a static model (metric of robust documentation)

- Staff Support: Lynn Laakso
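
A minimal Python sketch of the Product Development Effectiveness calculation follows. The function names are hypothetical. The key lists whole ballot counts (1-2, 3-4, 5+) while the results are averages, so the cut-offs used here (up to 2 is Green, up to 4 is Yellow, above that is Red) are an assumption; under it, 2.71 maps to Yellow, matching the tentative "Yellow ?" entry. A period with no ANSI publications returns no average instead of a division error like the #DIV/0! result shown for 2012T3.

```python
from typing import Optional, Sequence


def average_ballots(ballots_per_standard: Sequence[int]) -> Optional[float]:
    """Average ballots per published normative standard, or None if none were published."""
    if not ballots_per_standard:
        return None  # avoids dividing by zero when a period has no publications
    return sum(ballots_per_standard) / len(ballots_per_standard)


def ballot_status(average: Optional[float]) -> str:
    """Map an average ballot count to the dashboard color (assumed cut-offs)."""
    if average is None:
        return "No data"
    if average <= 2:
        return "Green"
    if average <= 4:
        return "Yellow"
    return "Red"
```

With this sketch, `ballot_status(average_ballots([]))` yields "No data" for a trimester with no ANSI publications.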

WGM Effectiveness

- Tracker #2057 was created to document the Strategic Dashboard criteria metrics for: WGM Effectiveness

- Draft Criteria

- % of WGs attending

- % of WGs that get an agenda posted in the appropriate timeframe

- % of WGs that achieve quorum at the WGM

- Number of US-based attendees

- Number of International attendees

- Number of non-member attendees

- Profit or Loss

- Number of FTAs

- Number of Tutorials Offered

- Number of Students Attending Tutorials

- Number of Quarters for which WGs had an agenda but did not make quorum

- For US-Based Locations: one set of %’s for success

- For International Locations: a different set of %’s for success

- Metrics may also be based on each WG's prior numbers

- Staff Support: Dave Hamill

- TSC Liaison: Jean Duteau

Dashboard

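| Industry responsiveness and easier implementation | Requirements Traceability and cross-artifact consistency | Product Quality | WGM Effectiveness |
| --- | --- | --- | --- |
| SI Project assessment: TBD | Requirements traceability: SI_RequirementsTraceability_thermometer.png (example for dashboard) | Product Development Effectiveness:<br/>2014T1 Jan-May average -> 1.8 = Green<br/>2013T3 Sep-Jan average -> 1.0 = Green<br/>2013T2 May-Sep average -> 1.4 = Green<br/>2013T1 Jan-May average -> 1.7 = Green<br/>2012T3 2012Sep-2013Jan average -> #DIV/0! (no ANSI publications this period)<br/>2012T2 May-Sep average -> 1.33 = Green<br/>2012T1 Jan-May average -> 2.71 = Yellow ?<br/>Key: Green: 1-2 ballots; Yellow: 3-4 ballots; Red: 5+ ballots | WGM Effectiveness: Metrics Through Sept 2013 WGM |
| TBD | Cross-Artifact Consistency:<br/>2014May: RED (6 standards had none checked of 10 eligible for this measure)<br/>2014Jan: RED (5 standards had none checked of 5 eligible for this measure)<br/>2013Sep: RED (3 standards had none checked of 9 eligible for this measure)<br/>2013May: RED (4 standards had none checked of 8 eligible for this measure) | Product Ballot Quality | WGM Effectiveness - International meetings: TBD |
| | Harmonization participation:<br/>64.65% rolling average ending 2014T1 = YELLOW<br/>51.25% rolling average ending 2013T3 = YELLOW<br/>46.28% rolling average ending 2013T2 = RED (almost there...)<br/>46.28% rolling average 2013T2 (May-Sep) to 2012T3 (2012Sep-2013Jan) = RED<br/>37.79% rolling average 2013T1 (Jan-May) to 2012T2 (May-Sep) = RED<br/>44.12% rolling average 2012T3 (2012Sep-2013Jan) to 2012T1 (Jan-May) = RED | | |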